Well it depends on how fast Alpha and Beta are. If Number starts out as 5, what will Alpha and Beta return if Alpha and Beta are run in parallel? You also have another function, Beta, that wants to divide Number by two and read it. Imagine you have one function, Alpha, that wants to take a Number, add 1 to it, and read it.

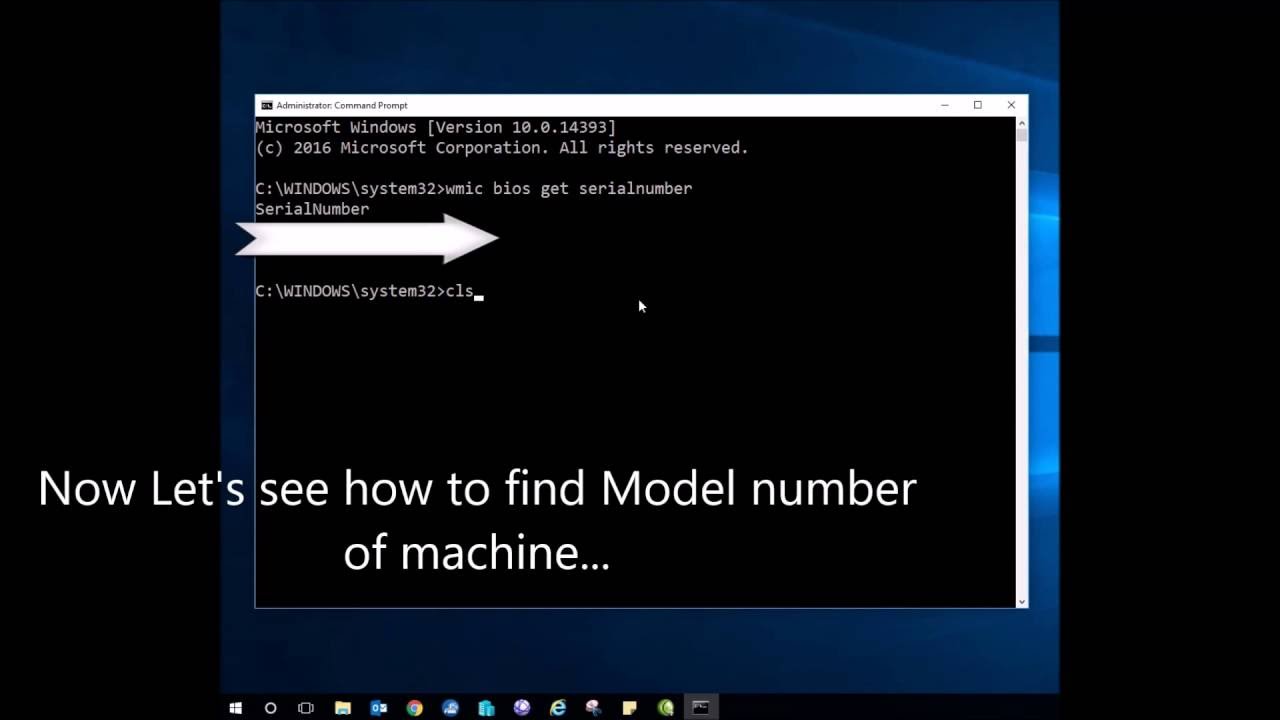

#HOW TO GET CORE NUMBER OF YOUR COMPUTER CODE#

The biggest problem with code executed in multiple places is that you can get all sorts of accidents when multiple things want access to the same thing. However, code across multiple threads can still be faster than single-threaded code, because the CPU can context-switch away from code that is waiting on something (e.g., API call, file read) to a thread that can be processing right away. Thus single-core computing is not truly paralell, since only one given thread will be executing a time. Within each core, the computer will constantly context-switch, going from thread to thread, executing each thread a bit at a time before switching to the next thread. Each core (aka "CPU") is essentially it's own individual computer with its own processes. In addition to having multiple threads and processes, your computer may have multiple cores. These threads can then be used to run multiple parts of the same program.įorking creates an entirely new process that resembles the old process and can be used to run another part of the same program. However, they can spawn additional threads through threading. Processes usually start out with just one thread. How is that? Processes, Threads, and Coresįirst we have to discuss processes, threads, and cores.Ī process is an executing instance that provides everything needed to execute a program (e.g., address space, open handles to files, environment variables.)Ī thread is a subset of a process that shares the resources of a process but can execute code indepedently. Paraellism on Your Computerīut it's also possible to have parallel code on just one computer.

This is also an example of parallelization, since the code is running both on the second API-connected computer and on your computer. This makes code harder to reason about and handle because you don't know when the API call will return or what your code will be like when it returns, but it makes your code faster because you don't have to wait around for the API call to take place.

But asynchronous code can simply keep on going and then the API call returns later. Normally, code then has to simply wait for the other computer to give it a response over the API. The idea here is that many times you do things like send a call to another computer, perhaps over the internet, using an API. The first example of this is asynchronous code. We can often make the exact same code go much faster through parallelization, which is simply running different parts of the computer code simaltaneously. If one part of your code takes a long time to run, the rest of your code won't run until that part is finished. Frequently, these statements are executed one at a time.

#HOW TO GET CORE NUMBER OF YOUR COMPUTER SERIES#

Computer code is a series of executed statements.
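The Alpha and Beta question can be made concrete with a short sketch. The Python below is a hypothetical illustration, not code from this post: it assumes each function is exactly two steps (update the shared Number, then read it), enumerates every order in which those steps could interleave, and then shows one common fix, a `threading.Lock`, that makes each function's update-and-read happen as one uninterrupted unit. The names `ALPHA_STEPS`, `BETA_STEPS`, `simulate`, `possible_outcomes`, and `run_locked` are made up for this example.

```python
import threading
from itertools import combinations

# Toy model (an assumption for illustration): each function is two atomic steps,
# "update the shared Number" followed by "read the shared Number".
ALPHA_STEPS = [("update", lambda n: n + 1), ("read", None)]
BETA_STEPS = [("update", lambda n: n / 2), ("read", None)]


def schedules(a_len, b_len):
    """Yield every interleaving of Alpha ('A') and Beta ('B') steps that keeps
    each function's own steps in their original order."""
    total = a_len + b_len
    for a_slots in combinations(range(total), a_len):
        yield ["A" if i in a_slots else "B" for i in range(total)]


def simulate(schedule, start=5):
    """Run one interleaving against a shared Number; return (Alpha's read, Beta's read)."""
    number = start
    remaining = {"A": iter(ALPHA_STEPS), "B": iter(BETA_STEPS)}
    reads = {}
    for who in schedule:
        kind, op = next(remaining[who])
        if kind == "update":
            number = op(number)
        else:
            reads[who] = number
    return reads["A"], reads["B"]


def possible_outcomes(start=5):
    """Collect every distinct (Alpha, Beta) result that some interleaving produces."""
    all_schedules = schedules(len(ALPHA_STEPS), len(BETA_STEPS))
    return sorted({simulate(s, start) for s in all_schedules})


# Real threads, with a lock making each function's update-then-read atomic.
number = 5
number_lock = threading.Lock()
results = {}


def alpha():
    global number
    with number_lock:  # no other thread can touch Number until this block ends
        number += 1
        results["alpha"] = number


def beta():
    global number
    with number_lock:
        number /= 2
        results["beta"] = number


def run_locked():
    """Run Alpha and Beta on two threads and return (Alpha's read, Beta's read)."""
    global number
    number = 5
    results.clear()
    threads = [threading.Thread(target=alpha), threading.Thread(target=beta)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results["alpha"], results["beta"]


if __name__ == "__main__":
    print(possible_outcomes())  # [(3.0, 3.0), (3.5, 2.5), (3.5, 3.5), (6, 3.0)]
    print(run_locked())  # (6, 3.0) or (3.5, 2.5), whichever thread gets the lock first
```

The simulation finds four different results from the same two functions, which is exactly the "it depends" in the question. With the lock, only the two orderings where one function finishes before the other starts remain possible; which of those you get still depends on which thread acquires the lock first.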